Heya,

Holy guacamole, I did something! Who knows what this fix breaks, but I couldn’t find any posts regarding this error on here yet, so I thought I’d put it up for those who follow. When publishing a .bgeo cache from Houdini I get the following error:

Error

DEBUG: Response <RestApiResponse [200]>

ERROR: !!! Creating of hero version failed. Previous hero version maybe lost some data!

Traceback (most recent call last):

File "C:\Users\stephen.scollay\AppData\Local\Ynput\AYON\dependency_packages\ayon_2401161802_windows.zip\dependencies\pyblish\plugin.py", line 527, in __explicit_process

runner(*args)

File "C:\Users\stephen.scollay\AppData\Local\Ynput\AYON\addons\openpype_3.18.7\openpype\plugins\publish\integrate_hero_version.py", line 96, in process

self.integrate_instance(

File "C:\Users\stephen.scollay\AppData\Local\Ynput\AYON\addons\openpype_3.18.7\openpype\plugins\publish\integrate_hero_version.py", line 363, in integrate_instance

head, tail = _template_filled.split(frame_splitter)

ValueError: not enough values to unpack (expected 2, got 1)

I believe this is due to the hero file template within AYON not containing a key for frame numbers, such as those found in .bgeo sequences. To fix it I changed the default hero file template from this:

{project[code]}_{folder[name]}_{task[name]}_hero<_{comment}>.{ext}

to this:

{project[code]}_{folder[name]}_{task[name]}_hero<.{@frame}><_{comment}>.{ext}

This adds an optional field for the frame and allows it to save!
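For anyone wondering why the missing frame key produces that exact ValueError, here is a minimal sketch of the failure. It only mimics the one line shown in the traceback; `FRAME_SPLITTER`, `split_on_frame`, and the example file names are made up for illustration and are not taken from the plugin source.

```python
# Hypothetical stand-in for the marker the plugin splits on. The real value
# used in integrate_hero_version.py is not visible in the traceback.
FRAME_SPLITTER = "_-_FRAME_SPLIT_-_"


def split_on_frame(filled_template, frame_splitter=FRAME_SPLITTER):
    """Mimic the failing line: unpack exactly two parts around the frame marker."""
    head, tail = filled_template.split(frame_splitter)
    return head, tail


# A filled template that contains a frame slot splits into a head and a tail.
with_frame = "prj_sh010_fx_hero." + FRAME_SPLITTER + ".bgeo"
print(split_on_frame(with_frame))

# A filled template without a frame slot: split() returns a single item,
# so unpacking into (head, tail) raises ValueError.
without_frame = "prj_sh010_fx_hero.bgeo"
try:
    split_on_frame(without_frame)
except ValueError as exc:
    print("ValueError:", exc)
```

If the template never emits the frame slot, there is nothing to split on, which would explain why adding the optional frame key makes the publish go through.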

Stephen


That indeed sounds like a bug in the hero template’s default.

I do wonder what happens with hero versions if the newer hero version has fewer frames. I’m pretty sure the old frames outside of that frame range are not deleted when publishing the new hero version - but maybe they are? (Not sure how the logic is written.)

Anyway, this definitely looks like a bug. @mustafa_jafar what do you think?

Huh, interesting point. This also depends on which frame range is treated as “truth”: the frames that exist on disk (at which point, what happens if there are two frame ranges for whatever reason?), the shot frame range, or the task frame range (I will admit I don’t know why there are both).

These are ‘inherited’ downwards - but in the end the task frame ranges are the ground truth for your workfile since they are closer to your work area. You don’t technically need to set the task frame ranges explicitly, since those by default inherit from the parent folder.

However, for published files, the metadata for the start frame and end frame of that particular publish should be published along with it, and that should always be the ground truth for the frame range of that version. That said, if the loader loads with $F in Houdini and your hero version happens to have frames outside of that range from previous hero publishes that were longer, then Houdini will of course still pick those up. As said before, I’m not entirely sure whether all existing files are removed when publishing a new hero version - if they are, then it should already work nicely as is - but if not, that’d be a bug.
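To check whether a hero folder still holds leftover frames from a longer previous publish, something like the following sketch could help. It is not part of AYON; `find_stale_frames` is a hypothetical helper, and it assumes file names end in `.<frame digits>.<ext>` (for example `prj_sh010_fx_hero.1001.bgeo` or `.1001.bgeo.sc`).

```python
import re

# Assumed naming convention: "<name>.<frame>.<ext>", where <ext> may itself
# contain a dot (e.g. "bgeo.sc"). Adjust the pattern to your own template.
FRAME_RE = re.compile(r"\.(\d+)\.[A-Za-z][\w.]*$")


def find_stale_frames(filenames, frame_start, frame_end):
    """Return file names whose frame number falls outside the published range."""
    stale = []
    for name in filenames:
        match = FRAME_RE.search(name)
        if match is None:
            # Not part of a frame sequence, so there is nothing to compare.
            continue
        frame = int(match.group(1))
        if frame < frame_start or frame > frame_end:
            stale.append(name)
    return sorted(stale)


# Example listing of a hero folder (made-up names).
files = [
    "prj_sh010_fx_hero.1001.bgeo",
    "prj_sh010_fx_hero.1002.bgeo",
    "prj_sh010_fx_hero.1050.bgeo",  # left over from a longer, older publish
    "prj_sh010_fx_hero.bgeo",       # single file, no frame number
]
print(find_stale_frames(files, frame_start=1001, frame_end=1010))
```

Feeding it the start and end frame stored with the publish and the file names in the hero folder would show whether old frames are surviving a new hero publish.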

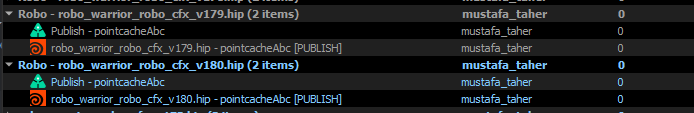

Yes, I believe this is the case… Let me share one of my saved notes.

I hit an issue with Hero templates and Houdini because <.{@frame}> was missing.

This hero template works for me

{project[code]}_{folder[name]}_{task[name]}_hero<_{comment}><.{@frame}>.{ext}

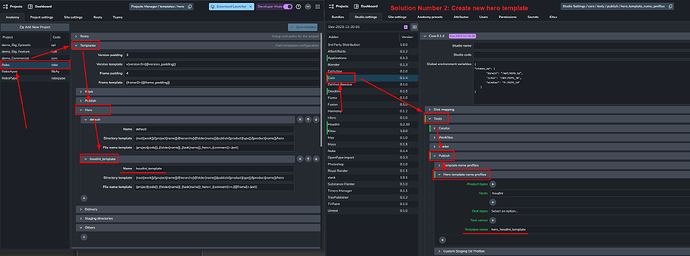

There are two possible solutions to use it:

- Update the default hero template, as it doesn’t include the frame by default.

- Create a new hero template for Houdini.

Also, you will need to update your hero template name profiles in bundle settings.

You will need to add the hero_ prefix when using a custom hero template.

ayon+settings://core/tools/publish/hero_template_name_profiles

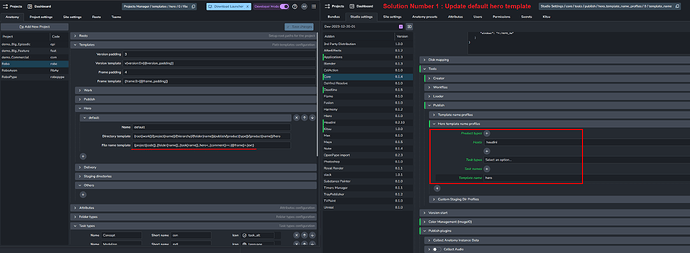

Solution 1

Solution 2

This shows that the default hero template value should be updated in settings to include the frame, right? So basically the current defaults are bad defaults.

Houdini didn’t like it.

I don’t know if they count as bad defaults, as I have no idea how adding the optional <.{@frame}> token affects other DCCs.

I would assume that the publish and hero templates should be largely identical, as heroes are just a direct copy of whatever the latest publish is, right? So if publish templates accept it, so should hero templates.
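A quick way to spot this kind of mismatch is to compare the keys two templates use. The sketch below is a plain string comparison, not an AYON API; `template_keys` and `missing_keys` are hypothetical helpers. Since the publish template isn’t quoted in this thread, the working hero template from above stands in as the reference; in practice you would paste in your own publish file template.

```python
import re


def template_keys(template):
    """Return the set of {key} names that appear in a template string."""
    return set(re.findall(r"\{([^{}]+)\}", template))


def missing_keys(reference_template, other_template):
    """Keys used by the reference template but absent from the other one."""
    return template_keys(reference_template) - template_keys(other_template)


# Both templates are copied from this thread.
default_hero = "{project[code]}_{folder[name]}_{task[name]}_hero<_{comment}>.{ext}"
fixed_hero = "{project[code]}_{folder[name]}_{task[name]}_hero<_{comment}><.{@frame}>.{ext}"

# Reports which keys the default hero template lacks compared to the reference.
print(missing_keys(fixed_hero, default_hero))
```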

Related to this same sort of issue (I have not tested this, by the way, but it appears to be true for renders): I foresee a problem when an artist submits two versions of the same scene to be rendered. As workfile caches appear not to be versioned, I presume that the newer cache would overwrite the old one before publishing? Is there some way to prevent this (risky, but should it write directly to the publish location or something)?

Yup, that’s what I would expect - they should match.

Yes, the later one will overwrite the first one.

This happens in Houdini farm publishing where I need to have one publish at a time.

So, I have to disable my old farm jobs.

I don’t know if that’s considered bad.

As far as I know, we can version the staged files to solve that problem, but users can easily change the output file paths manually in the ROP node (unless some extractor plugin forces an output path just before rendering the ROP).
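As a rough idea of what versioning the staged files could look like, here is a small sketch that picks the next free `vNNN` folder under a cache root. This is not how AYON does it; `next_versioned_dir` and the folder layout are assumptions for illustration only.

```python
import re
import tempfile
from pathlib import Path


def next_versioned_dir(cache_root):
    """Create and return the next free 'vNNN' folder under cache_root."""
    root = Path(cache_root)
    root.mkdir(parents=True, exist_ok=True)
    highest = 0
    for child in root.iterdir():
        match = re.fullmatch(r"v(\d+)", child.name)
        if child.is_dir() and match:
            highest = max(highest, int(match.group(1)))
    new_dir = root / f"v{highest + 1:03d}"
    # mkdir() raises FileExistsError if another submission grabbed the same
    # version in the meantime, instead of silently sharing the folder.
    new_dir.mkdir()
    return new_dir


# Demo in a throwaway temp folder: each submission gets its own staging dir.
with tempfile.TemporaryDirectory() as tmp:
    first = next_versioned_dir(Path(tmp) / "cache")
    second = next_versioned_dir(Path(tmp) / "cache")
    print(first.name, second.name)
```

Each farm submission would then write to its own folder, so a later job could not overwrite the cache of an earlier one. It would still need something to set the ROP output path right before rendering, otherwise a manually edited path bypasses it.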
